Biography

Kaidong Chai is a Master’s student in Computer Science at the University of Massachusetts Amherst. He received his Bachelor’s degrees in Computer Science and Mathematics from the University of Massachusetts Amherst in 2022. He has engaged in research related to massive data generation and processing, human pose estimation, and educational technology. His research interests include computer vision, machine learning, and distributed systems.

- Machine Learning

- Computer Vision

- Distributed System

M.S. in Computer Science, 2024

University of Massachusetts Amherst

B.S. in Computer Science, 2022

University of Massachusetts Amherst

B.S. in Mathematics, 2022

University of Massachusetts Amherst

Experience

Featured Publications

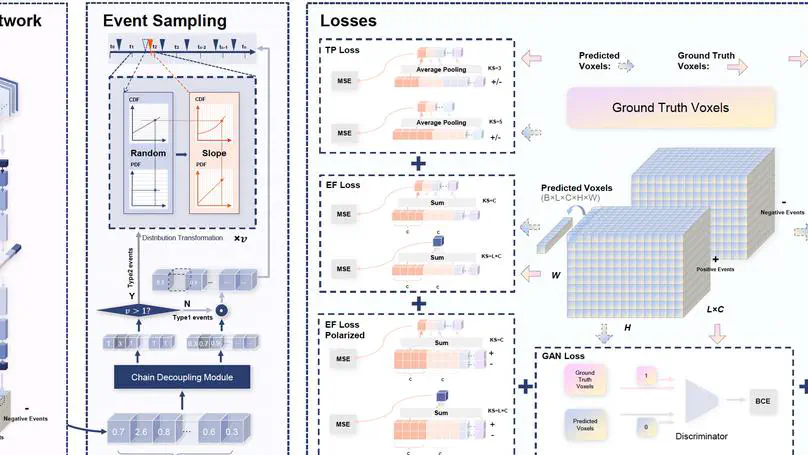

Dynamic Vision Sensor (DVS)-based solutions have recently garnered significant interest across various computer vision tasks, offering notable benefits in terms of dynamic range, temporal resolution, and inference speed. However, as a relatively nascent vision sensor compared to Active Pixel Sensor (APS) devices such as RGB cameras, DVS suffers from a dearth of ample labeled datasets. Prior efforts to convert APS data into events often grapple with issues such as a considerable domain shift from real events, the absence of quantified validation, and layering problems within the time axis. In this paper, we present a novel method for video-to-events stream conversion from multiple perspectives, considering the specific characteristics of DVS. A series of carefully designed losses helps enhance the quality of generated event voxels significantly. We also propose a novel local dynamic-aware timestamp inference strategy to accurately recover event timestamps from event voxels in a continuous fashion and eliminate the temporal layering problem. Results from rigorous validation through quantified metrics at all stages of the pipeline establish our method unquestionably as the current state-of-the-art (SOTA).

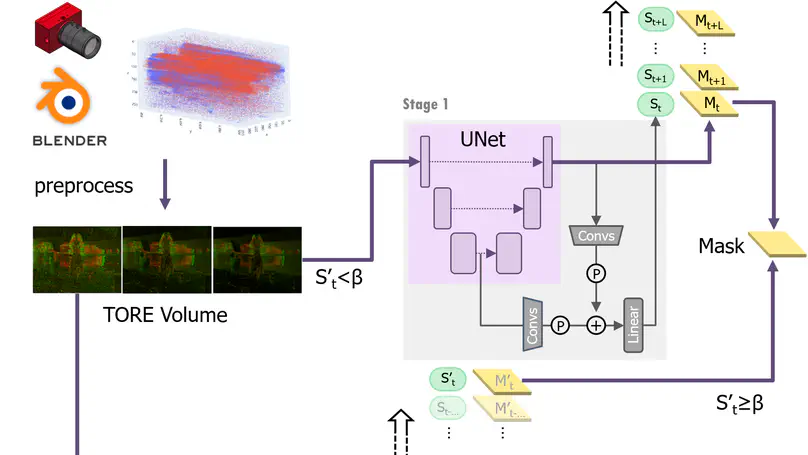

Technology-mediated dance experiences, as a medium of entertainment, are a key element in both traditional and virtual reality-based gaming platforms. These platforms predominantly depend on unobtrusive and continuous human pose estimation as a means of capturing input. Current solutions primarily employ RGB or RGB-Depth cameras for dance gaming applications; however, the former is hindered by low-light conditions due to motion blur and reduced sensitivity, while the latter exhibits excessive power consumption, diminished frame rates, and restricted operational distance. Boasting ultra-low latency, energy efficiency, and a wide dynamic range, neuromorphic cameras present a viable solution to surmount these limitations. Here, we introduce YeLan, a neuromorphic camera-driven, three-dimensional, high-frequency human pose estimation (HPE) system capable of withstanding low-light environments and dynamic backgrounds. We have compiled the first-ever neuromorphic camera dance HPE dataset and devised a fully adaptable motion-to-event, physics-conscious simulator. YeLan surpasses baseline models under strenuous conditions and exhibits resilience against varying clothing types, background motion, viewing angles, occlusions, and lighting fluctuations.